Dashboards that build themselves break every assumption a dashboard tool makes.

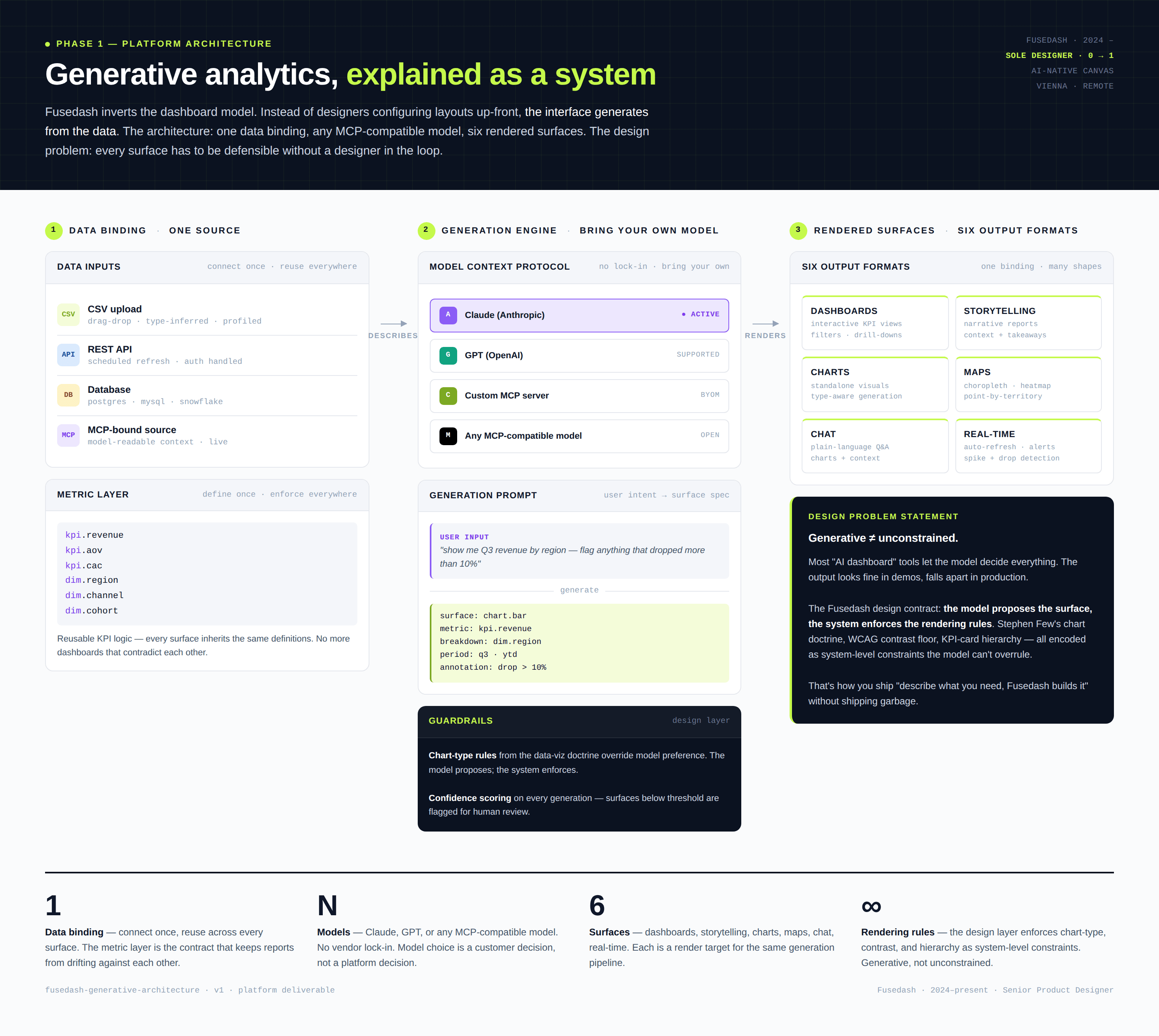

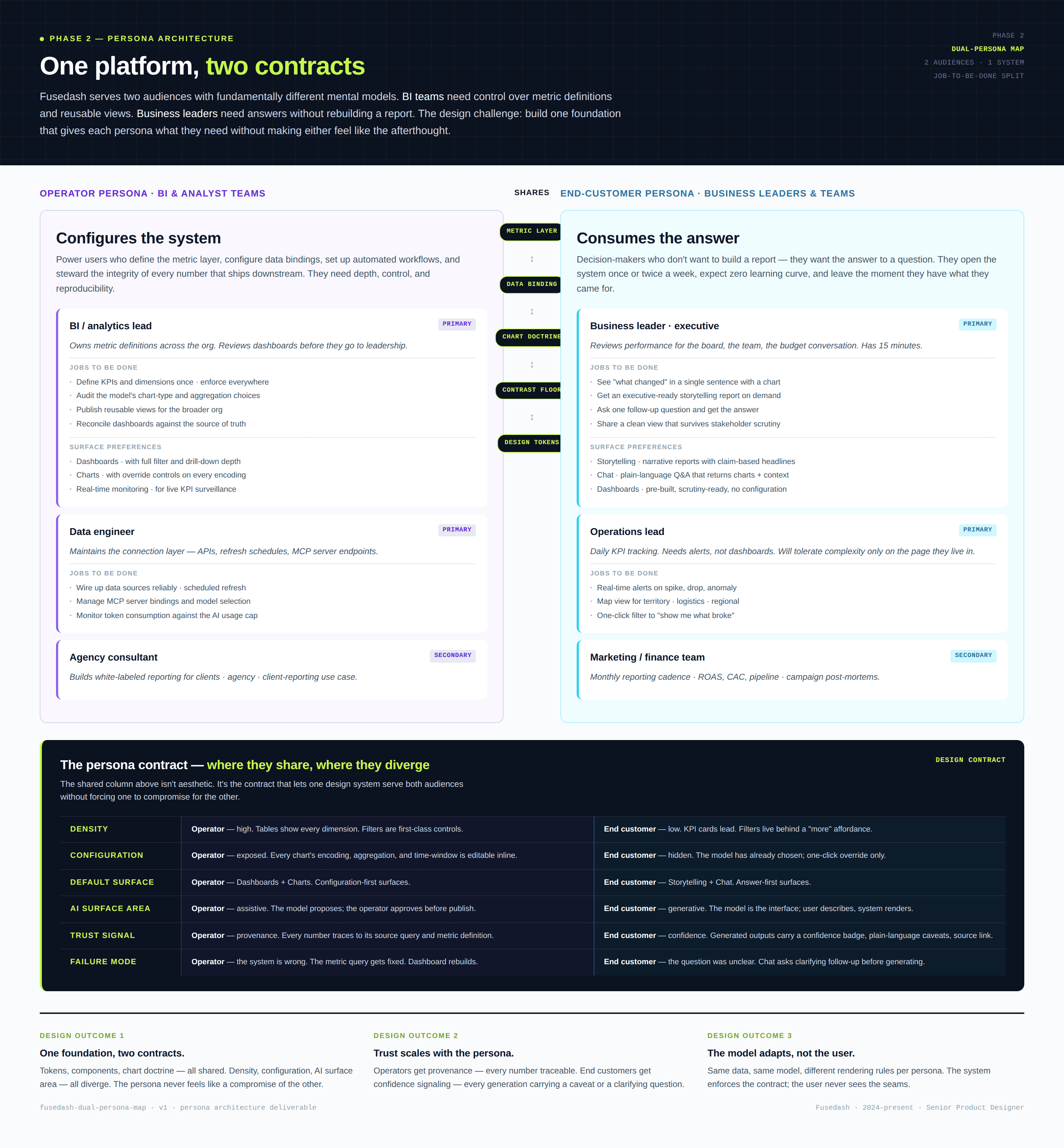

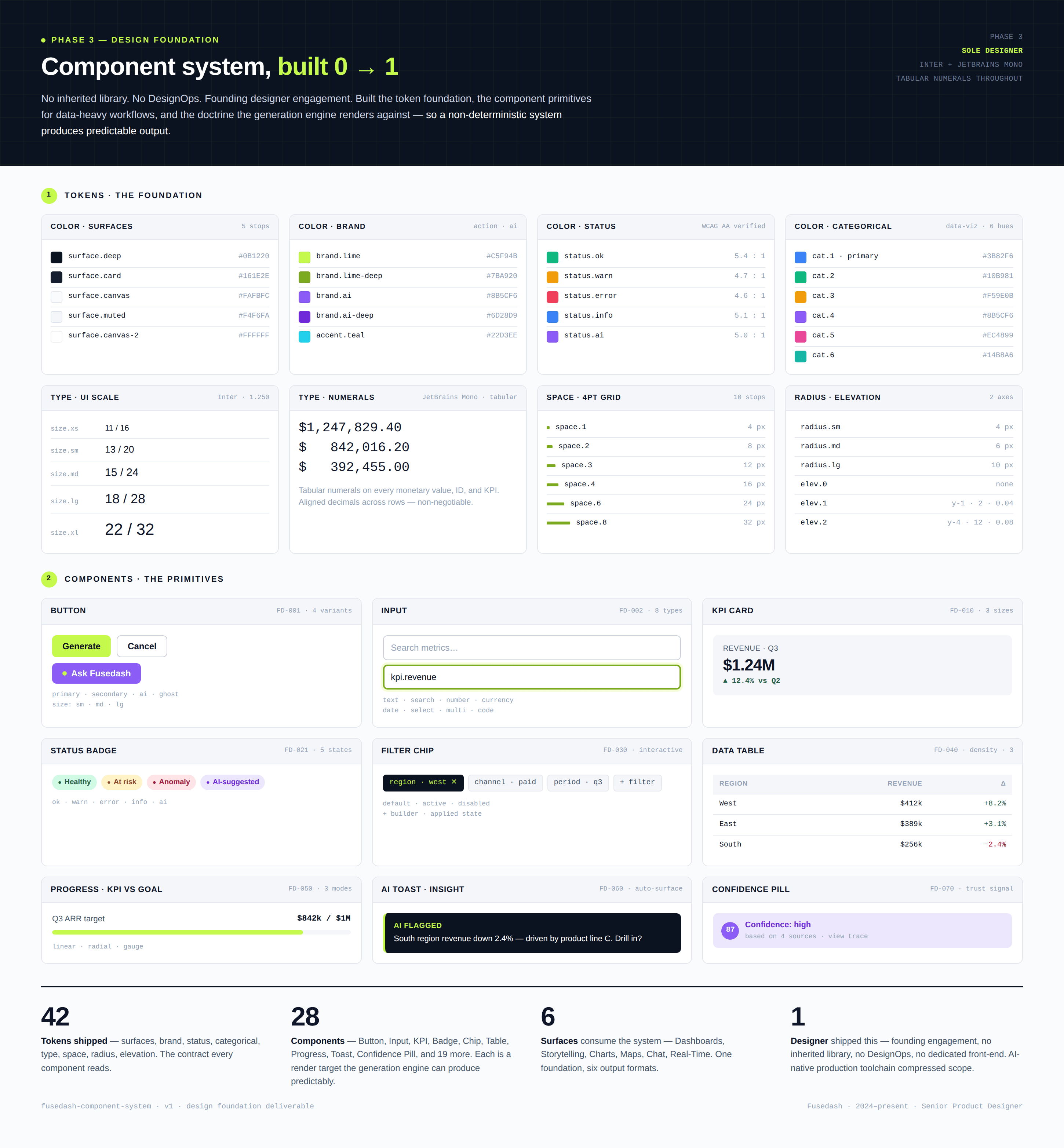

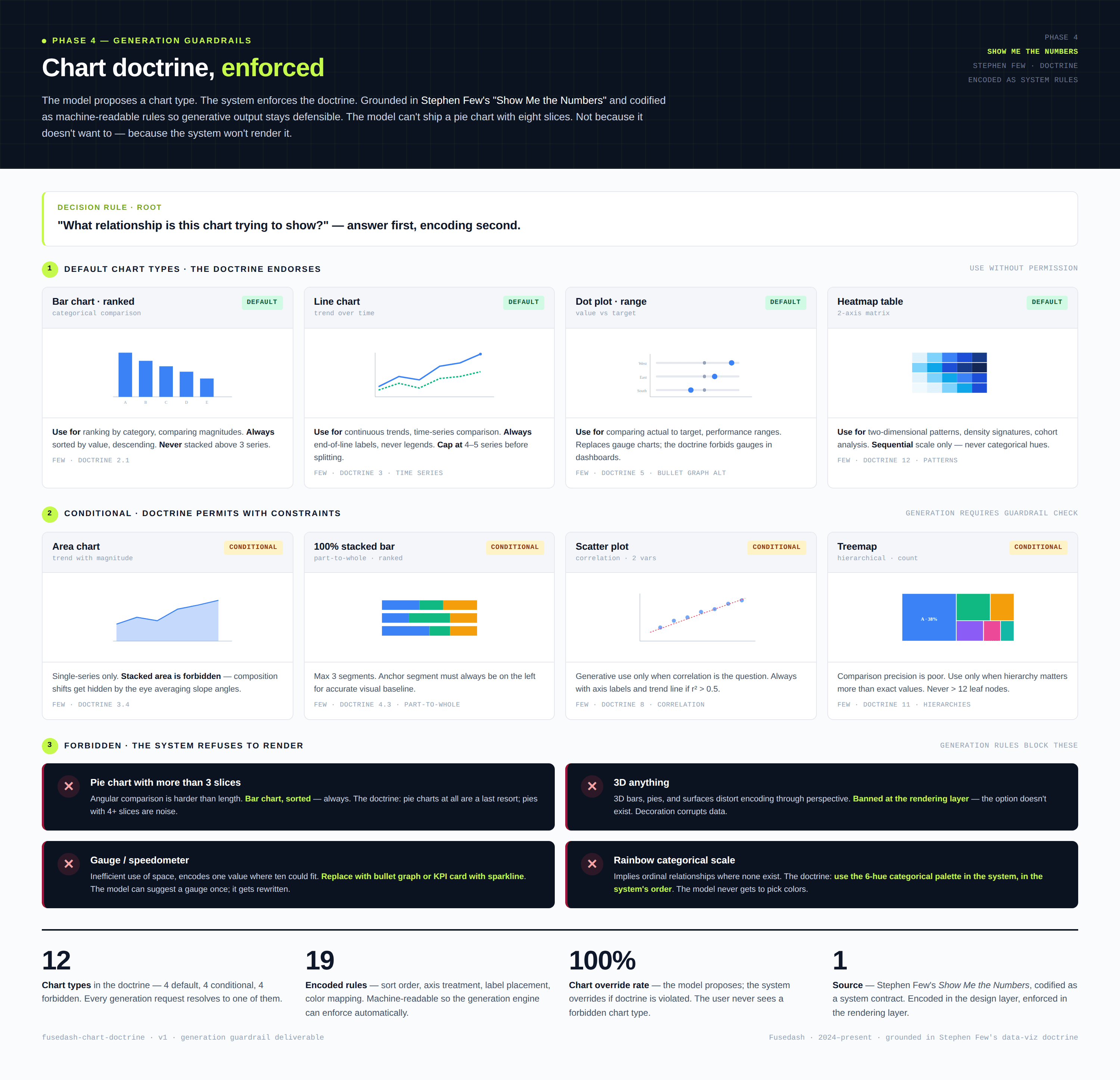

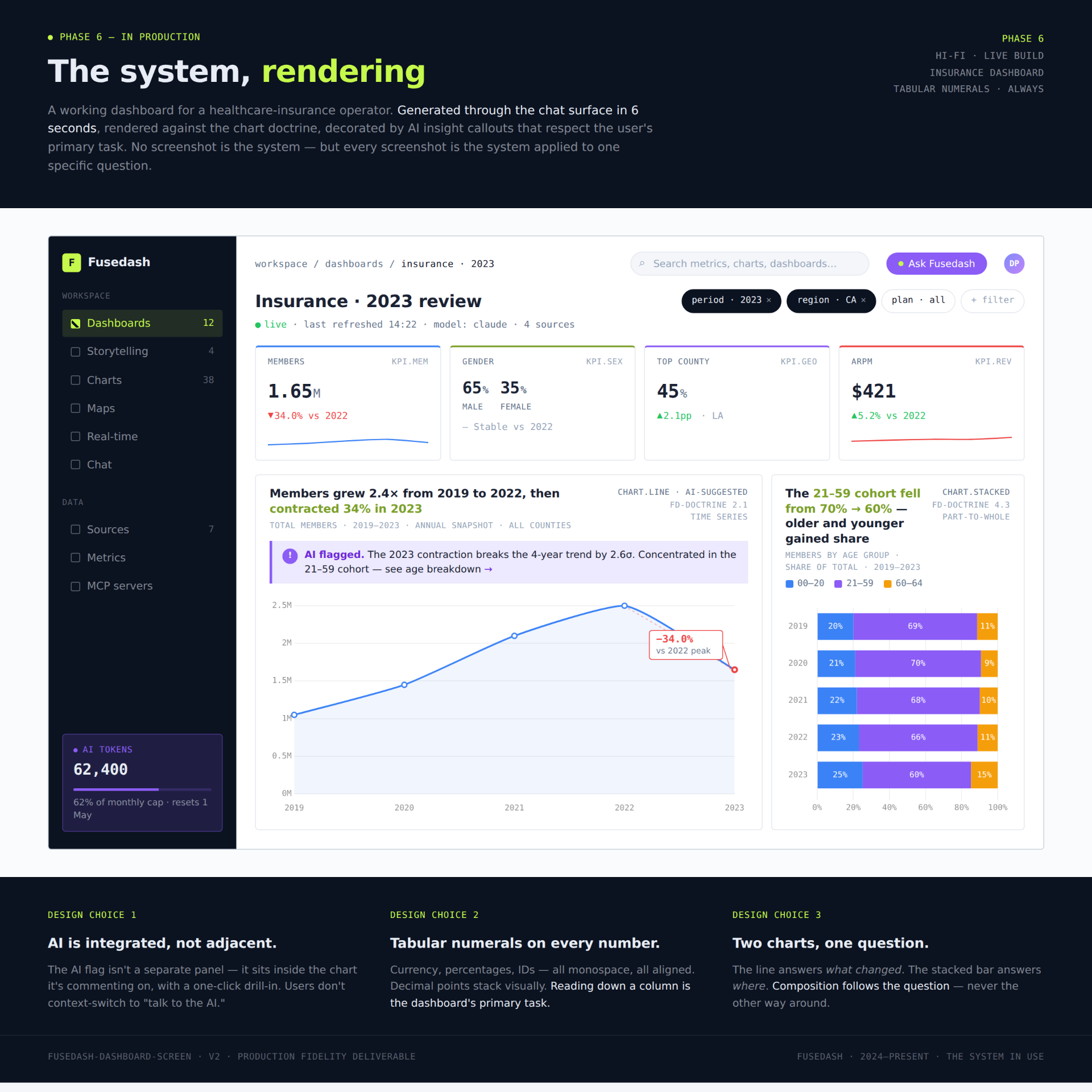

Fusedash is a generative analytics platform: upload a CSV, connect a REST API, or link any MCP-compatible model, and the canvas generates KPI dashboards, AI charts, choropleth and heatmap maps, plain-language data chat, and real-time monitoring views. The product is built on the Model Context Protocol (MCP), so customers bring their own AI model, with no lock-in and no per-vendor dependency. Pricing runs on token packs that cover AI-powered actions: chart generation, summaries, and conversational queries.

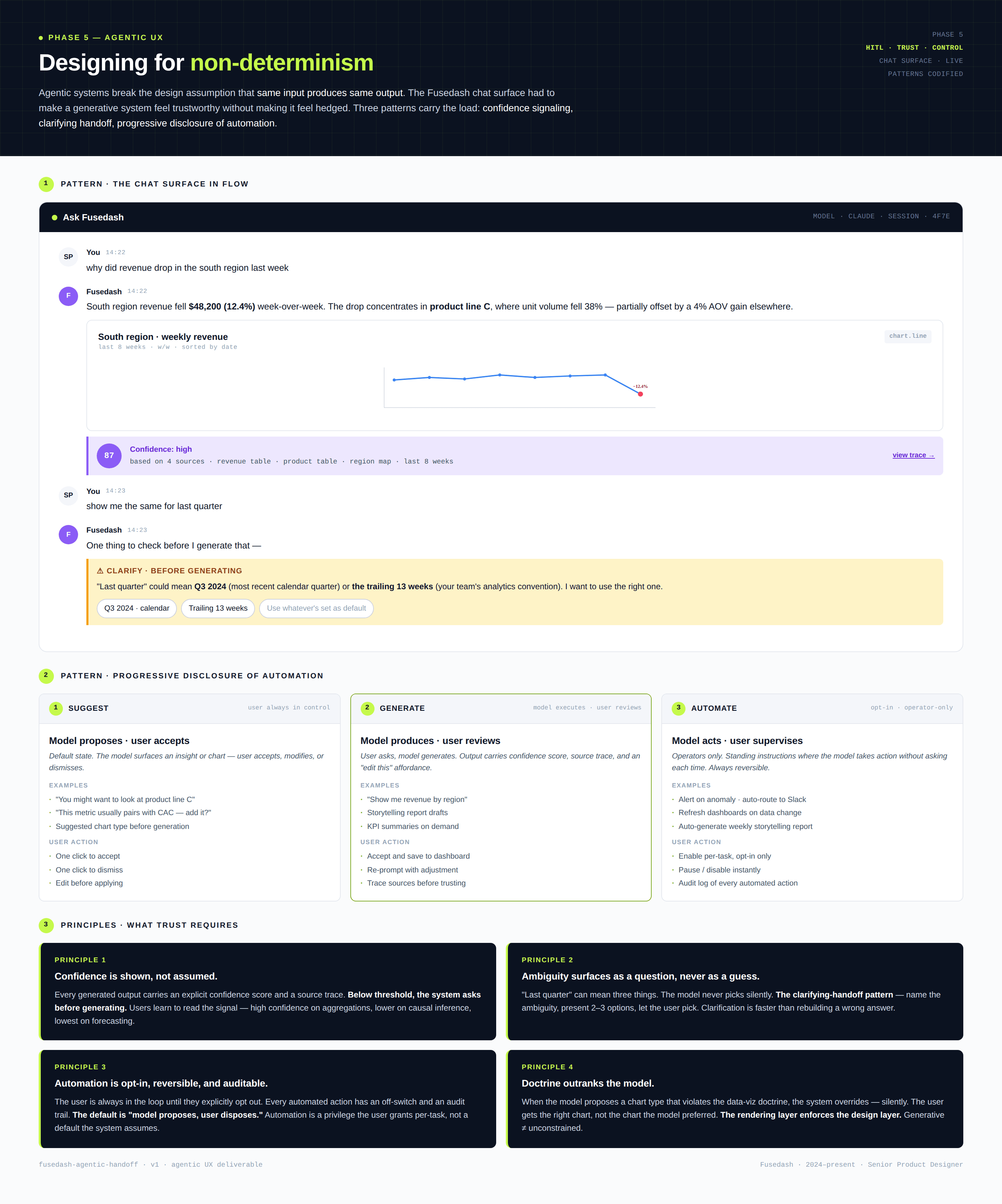

That product premise breaks the templates that dashboard tools have leaned on for two decades. Fixed dashboards assume a designer or analyst pre-builds the view; Fusedash assumes the system builds it. Deterministic UI assumes one input produces one output; generative UI produces a range of outputs that need to be ranked, edited, accepted, or rejected. The design problem isn't "lay out a dashboard"; it's "design the contract between a human, a non-deterministic agent, and a dataset where every interaction may produce something the user has never seen before."
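
To make the "ranked, edited, accepted, or rejected" lifecycle concrete, here is a minimal sketch of how such a contract could be modeled. It is an illustration only, not Fusedash's implementation: the `Candidate` shape, the `Status` values, the `score` field, and the `rank` and `decide` helpers are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Status(Enum):
    """Hypothetical lifecycle states for one generated view."""
    PROPOSED = "proposed"
    ACCEPTED = "accepted"
    EDITED = "edited"
    REJECTED = "rejected"


@dataclass(frozen=True)
class Candidate:
    """One view the agent proposes for a dataset (assumed shape)."""
    chart_type: str                    # e.g. "kpi", "choropleth", "heatmap"
    score: float                       # assumed ranking signal; higher ranks first
    status: Status = Status.PROPOSED


def rank(candidates):
    """Return the still-open proposals, best score first."""
    open_ones = [c for c in candidates if c.status is Status.PROPOSED]
    return sorted(open_ones, key=lambda c: c.score, reverse=True)


def decide(candidate, status, chart_type=None):
    """Record the human's decision on an open proposal.

    Returns a new Candidate; the original proposal is left untouched so the
    history of what the agent actually generated is preserved.
    """
    if candidate.status is not Status.PROPOSED:
        raise ValueError("candidate has already been decided")
    if status is Status.PROPOSED:
        raise ValueError("a decision must accept, edit, or reject")
    if status is Status.EDITED:
        if chart_type is None:
            raise ValueError("an edit must supply the revised chart_type")
        return replace(candidate, chart_type=chart_type, status=status)
    return replace(candidate, status=status)


# The same prompt may yield several candidate views rather than one.
proposals = [
    Candidate("heatmap", 0.42),
    Candidate("kpi", 0.91),
    Candidate("choropleth", 0.67),
]
ranked = rank(proposals)
accepted = decide(ranked[0], Status.ACCEPTED)
edited = decide(ranked[1], Status.EDITED, chart_type="heatmap")
rejected = decide(ranked[2], Status.REJECTED)
```

Keeping each candidate immutable and returning a new record on every decision is one way to keep the agent's output and the human's response separately inspectable; a real system would likely track far more than a chart type and a score.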